I’ve begun a new writing project related to AI and education on my Substack, Ship of Theses. Check it out, if you like. You can learn why flaws are good, why LLMs are bad students, and why it’s the death of the author, all over again.

Heaven

I

Like many Americans of my generation, I learned theology not so much from church but from pop music. Sure, I was exposed to religion here and there. No parents, not even my own, can keep their children safe forever. And Lord knows my folks tried. Mama tried. When I was a kid, Sunday was just Saturday, Part II. Sunday morning meant that the cartoons didn’t start until later, after these men in nice suits finally quit begging me to call their toll-free line.

I once asked my mother who those guys were. She looked me in the eye and said, “Adam, televangelists are criminals they allow on TV to make money.” I spent several years of my life believing that was literally true. Once or twice a year, Sunday meant we’d trudge to Dan River Church, gathering place for my grandma’s Primitive Baptist congregation. Slouched in creaky wooden benches, I’d let the preaching about the perils of hellfire and heaven’s eternal reward bounce off my ears. The message didn’t sink in. Heaven sounded so boring.

It’s a cliché, I know. But eternal life with my family—my family—that’s the reward for a lifetime of forbearance? What would we do in heaven? Wouldn’t twanging harps get old? I tried from time to time to muster up the holy feeling I imagined everyone else felt at church. But it didn’t take. Turns out it’s difficult to fake that sort of thing. So where does an unchurched child like me go to find divine inspiration? Pop music, that’s where.

II

You can dismiss pop music as superficial if you like. After all, as a genre, pop exists primarily to get your booty shaking. That’s its prime directive. And pop is good at it. It’s also easy to dismiss pop because there is a lot of bad pop out there. The worst pop, those overproduced, commercial, autotuned songs that were grown in test tubes before being unleashed on the unsuspecting public, makes me vomit in my mouth, just a little. But the best pop songs, the ones that put some thought and artistry into their lyrics, can accomplish much more. A little bit of sugar makes the medicine go down, and a good dance beat can smuggle an interesting idea past your inner TSA agent and deposit it into your subconscious.

That’s what happened to me, and today I want to share with you a piece of the gospel of the Talking Heads.

III

In case you don’t know them, the Talking Heads are easily one of the most important bands of the last fifty years. They helped define the sound of new wave in the 1980s. Their song “Heaven” imagines heaven as a nightclub. “Everyone is trying,” lead singer and lyricist David Byrne croons, “to get to the bar. The name of the bar, the bar is called heaven.”

Byrne uses two motifs to describe Heaven in this song. In the chorus, he sings, “Heaven… Heaven is a place, a place where nothing, nothing ever happens.” That’s the first. Heaven is a place where nothing ever happens. The second motif is developed in the verses. This time, Byrne describes Heaven as a place where the same things happen over and over and over again. “The band in heaven, they play my favorite song. Play it one more time. Play it all night long.” “When this kiss is over, it will start again. It will not be any different. It will be exactly the same.”

So these two ideas are what we need to hold in our mind at the same time: Heaven is a place where nothing happens; Heaven is a place where the same things happen over and over again. Byrne’s lyrics go a step further, though, and this is the really interesting part. He doesn’t merely rest on this paradox.

Listen to these lines from the chorus:

“It’s hard to imagine,

that nothing at all,

could be so exciting,

could be this much fun.”

That first line, where he says, “it’s hard to imagine”—what does that line do? It acknowledges our skepticism. It nods to the listener. It says, “Look, I get it. This is a weird idea. You’ll experience the same party, the same song, the same kiss, over and over again. It won’t change AND each time, you’ll experience it as new. This is weird. I know.” I think this simple act of acknowledging the listener’s skepticism is the crucial moment in this song. Because instead of creating an idea for you, instead of giving an idea to you, saying “it’s hard to imagine” invites you to co-create the meaning, to actively, imaginatively participate in joining with Byrne in imagining your version of heaven. Saying “it’s hard to imagine” casts Byrne not as a preacher on the pulpit, disseminating the received Word of God, but as a person like you and me, a person who might find it difficult to imagine heaven.

In this way, Byrne is much more effective in speaking to a skeptic like me than the preachers at Dan River Church. I pulled away from their ardent belief in this version of heaven, that version of hell, the Heaven they sought to give me, not to co-create with me. Byrne was not a Unitarian Universalist, as far as I know, but that message has a distinctly UU flavor, doesn’t it?

IV

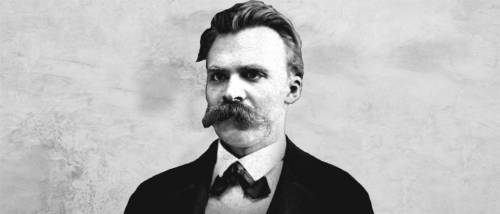

It’s hard to imagine—perhaps very hard to imagine—a more unlikely philosopher to invoke in a sermon about Heaven than Friedrich Nietzsche. After all, Nietzsche declared, “God is dead!” He can’t have cared much for Heaven. Few philosophers have expressed more disdain for Judeo-Christian thought than Nietzsche, who dismissed it as mere “slave morality.” Yet there is a major part of his thought that speaks to the thread I’ve been tugging on here.

Nietzsche believed that meaning, purpose, and truth were human constructs, not ordained by God or metaphysics. Even so, he used a metaphysical idea to explain a key component of his philosophy. That’s the idea of eternal return. Eternal return: Many religions throughout history have imagined time as a cycle. Nietzsche, however, is the first non-religious thinker to embrace the idea of eternal return. Here is an excerpt from The Gay Science:

“What if a demon crept after you one day or night in your loneliest solitude and said to you: ‘This life, as you live it now and have lived it, you will have to live again and again, times without number; and there will be nothing new in it, but every pain and every joy and every thought and sigh and all the unspeakably small and great in your life must return to you, and everything in the same series and sequence—and in the same way this spider and this moonlight among the trees, and in the same way this moment and I myself. The eternal hour-glass of existence will be turned again and again—and with it, you dust of dust!’ – Would you not throw yourself down and gnash your teeth and curse the demon who thus spoke?”

Instead of Byrne’s party, Nietzsche casts the decisive moment of eternal return not as pleasure but as a moment of supreme loneliness. A moment of angst, of existential dread. That’s how it begins, at least. Take a moment to consider what Nietzsche is suggesting here: Can you imagine living every moment of your life, in the exact same sequence, from birth to death, not only once, but again and again? How would you react to learning that eternal return is your predicament?

Nietzsche imagines two reactions: One is to despair—“Would you not throw yourself down and gnash your teeth and curse the demon who thus spoke?” But he also posits another, opposite reaction. What, he asks, would your internal state have to be to learn of eternal return and respond to the demon, “You are a god and never did I hear anything more divine!”

Here is Nietzsche again: “Or how well disposed toward yourself and towards life would you have to become to have no greater desire than for this ultimate eternal sanction and seal?”

Nietzsche did not believe in the literal, metaphysical truth of eternal return. He did not believe that we literally are born, live, die, and repeat the cycle in exactly the same order an infinite number of times. Rather, he used the idea of eternal return like a test. A great human spirit, he thought, said yes to the world. This does not mean abdicating morality. It does not mean turning a blind eye to injustice. Far from it. It means embracing, in a radical way, the responsibility of making meaning out of our experiences. And when I say radical here, I’m thinking of its etymological meaning, which shares the same origin as the word root.

A radical embrace of the responsibility of meaning making is one that begins in your roots, that is personal down to the core of your being. It is not amoral, but grounded in a morality derived from human, not divine, origins. The only way to live so well that you could repeat the exact same life ten thousand times over without regret is to fully embrace each moment for what it is.

That’s what Nietzsche is getting at when he writes:

“My formula for greatness in a human being is amor fati: that one wants nothing other than what is, not in the future, not in the past, not in all eternity. Not merely to endure that which happens of necessity, still less to dissemble it… but to love it…” He says we must love the world as it is, to love our experiences. But what could he mean by “love” here? We frequently use “love” superficially, so it’s easy to glaze over this word choice. He doesn’t mean “love” in the sense of “I love tacos.” (Actually, there is nothing superficial about my love for tacos.)

Rather, it is that radical kind of love—that transformative love—that is perhaps most difficult to feel with your family, with your children, with your lovers, for it is a love that doesn’t seek to change but to accept the other for who they are, who they truly are, not who we wish them to be. Nietzsche was no Buddhist, but that idea sounds quite Buddhist, doesn’t it? To let go of all illusions and cravings, to let go of desiring for this to be that. Amor fati—love of fate. It’s hard to imagine, but how great would your love have to be to love not only one imperfect lifetime, but an infinity of them?

V

Let’s put these ideas together now. Heaven is a place where nothing happens. Which means Heaven is a place where the same things happen over and over again. And one measure of human greatness is radically accepting things as they are, not as we wish for them to be. And not only tolerating this reality, but loving it so completely that it would be an utter joy to live the same life over again.

Is that Heaven?

VI

Byrne’s song is about the idea of Heaven as eternal pleasure. You’re in a nightclub. The band is playing your favorite song. There is a party. You’re kissing your lover. And when it ends, it all starts again. And somehow, you never get tired of it. Hard to imagine, right? Nietzsche’s scenario begins with existential dread—a demon cursing you to live the same life over and over again—but transforms that dread into a radical, loving embrace of reality. Both examples begin at extremes: extreme pleasure on one hand, extreme suffering on the other. Perhaps a better test, though, is not the extremes but the mundane.

I’m a dad. I live in the suburbs. There are many days when I feel like I’m caught in the plot of a personal Groundhog Day.

Wake up a little too early (because every night I somehow manage to stay awake too long).

Feed the kid.

Feed myself.

Cheerios taste the same every day.

Kiss my wife.

Drop the kid off at school.

Commute to campus.

Teach classes I’ve taught before.

Read the same freshman essays I’ve read before.

Commute home.

Eat the leftovers.

Load the dishwasher.

Read Goodnight Moon for the thousandth time.

“Goodnight, bowl of mush!”

Wish the kid, “Sweet dreams.”

Somehow manage to stay awake too long.

Pass out into my bed.

Rinse, repeat.

I tend to think of all these mundane, routine activities as the things I must endure before getting to the good stuff. But what if I’m wrong? I’ve been a parent now for three years. There is nothing, I’ve discovered, like being a parent to make you keenly aware of the power of routine. Everything repeats. My child wants to read the same books over and over again, and he corrects me if I change even a single word.

“No, Dad. No,” he says. “That’s not right.”

In the moments when I wake up to my life, I’ll admit that I’ve often felt Nietzsche’s sense of dread. Can this be all there is? How am I reading the same book yet again? How am I washing the same dishes yet again? Byrne’s song and Nietzsche’s philosophy are powerful because they speak to our lived experience of routine. And we repeat so many things.

We fight the same fights with our lovers again and again. We make the same mistakes over and over. We learn the same lessons time after time. It’s hard to imagine many experiences that are truly unique. Most of life is a repetition of something. Life’s endless repetitions can be soul crushing. They can make you feel like a demon has cursed you to endlessly repeat the same commute over and over, to load the dishwasher again and again, and to read Goodnight Moon, again, for a merciless toddler who demands nothing short of perfection.

But perhaps, perhaps, the key to the kingdom of Heaven is finding a way to love these endless repetitions. When this day is over, it will begin again. It will not be any different. It will be exactly the same. Byrne’s lyrics describe an attitude, a perception, not an epistemological fact. So maybe Heaven is a choice. Maybe the key to Heaven is the act of controlling your perception. You choose to despair at life’s endless repetition, or you choose to love it.

Amor fati.

It’s hard to imagine that you could make that choice, that it would be so easy, and so impossibly hard, to enter into Heaven.

Vision/Revision

The good news of writing, I often tell my disbelieving students, is the good news of terrible first drafts. All first drafts are terrible. Every one. Kurt Vonnegut, who wrote 27 book-length works of fiction, non-fiction, and drama, said: “When I write, I feel like an armless, legless man with a crayon in his mouth.” First drafts are born as disappointments—and it really can’t be otherwise. It’s not their fault. Everyone who has engaged in any creative practice, from cooking to clogging, knows this is true. Your first attempt is usually not just bad, but awful. And that’s okay.

Terrible first drafts are the good news of writing because a) they are the great equalizer: all first drafts are terrible, and b) it means drafts usually get better. Even though I earn my paycheck by teaching people how to write more gooder, I don’t actually enjoy the process of writing all that much. Writing makes me feel like an armless, legless man with a broken crayon in his mouth. I love, however, to have written—because then, once I’ve got something, anything, on paper, then I have something to tinker with. Then I have something to disassemble and put back together. Then I have something to revise.

To revise means, literally, to re-envision. To reimagine the draft from the ground up, to chop and hack and cut and rearrange the pieces into a more effective or more pleasing order: some of my fellow composition teachers have brought scissors to class and actually had their students cut their papers up into pieces so they can play around with different arrangements. (Though I have not been brave enough to try this in my classroom yet, I admire their dedication to the pedagogy of creative destruction.)

I find in revision a pretty good metaphor for thinking about anything that involves some sort of craft or iterative practice—that is, anything we do again and again and again with the hope of improving, not by leaps and bounds, but by slow, methodical steps. If there is something in your life that you pay attention to and attempt to improve, then you are revising. When you join or leave a community, you revise that community. Today revises yesterday just as tomorrow will revise today.

If revision had a spirit guide, it would have to be, I think, Walt Whitman, the poet who revised a single book of poetry, titled Leaves of Grass, through four editions over the course of about 37 years. The first edition, in 1855, contained 12 poems. The last edition (the so-called “deathbed” edition), published in 1892, contained over 400, and the two editions in-between those contain revisions of the original poems. (These multiple editions are a great boon for literary critics like me, for they give us endless hairs to split and thus ensure job security.)

I look to Whitman to assuage one of the fears of revision, namely the fear of revising to the point of losing the essence of the original. Which version of Leaves of Grass is the correct version? Is it the original vision he had as a young man, or the culmination of nearly 40 years of practice? Or is it one of the two middle editions, published when Whitman was at the height of his creative powers? Whitman lets us off the hook for that question toward the end of “Song of Myself,” when he pauses to declare: “Do I contradict myself? Very well then, I contradict myself. I am large. I contain multitudes.” That is, we don’t need to go full “ship of Theseus” here.

The truth is, I think, there is no vision without revision—or, to say it more accurately, all new visions are already revisions, for as I read in a good book somewhere, there is no new thing under the moon. Nothing is totally unprecedented, and even the boldest, most “original” visions are themselves revisions of what has come before. Karl Marx’s dream of a communist utopia is a revision of capitalist society. Whitman’s speaker, the singular “I” that somehow contains a plurality of “multitudes,” is a revision of the Cartesian “cogito.” Revision is good news because it frees us from the expectation of originality, opening the gates to the kingdom of play and experimentation. So let us be large, let us contain multitudes, let us envision and re-envision.

Words that Heal

Here is a slightly embarrassing story about me that my mother likes to tell: when I was two years old, she took me in to see our family doctor. While doing his routine checkup, he asked her if I was putting together two-word phrases, like “Milk please.” Before she could answer, apparently, I asked the doctor, “Can I see your stethoscope?”

Words have always mattered a great deal to me, which is why, in some respects, it seems natural, possibly inevitable, that I majored in English and later became an English professor. Words get stuck in my head, sometimes just because I like their sound. It’s like having a popcorn kernel stuck in your teeth. My mind plays that word over and over and over again, tracing all of its little bumps and ridges. Stethoscope. STETH o scope.

Here is another slightly embarrassing fact—related to the first—about me: some of my earliest memories are of worrying incessantly.

I come from a great family of worriers. My mother worries, my grandmother worries. We’re good worrying stock. As a young child, I thought it was normal to lie awake for hours at night, gripped with fear about things that were far beyond one’s control. I recall, for instance, learning about an invasive species of snake somewhere in the Pacific—let’s say Guam. What were they going to do about all those snakes? They were killing all the birds! This fear wasn’t the idle sort, the kind that is really more akin to curiosity than true fear. What I felt about those snakes in Guam is the gut-twisting, bowel-punching fear that, evolutionarily speaking, is supposed to make you jump back from the rattlesnake hissing at your feet.

My natural aptitude for worrying went through something like boot camp in my early adolescence, when a string of bullies made me begin to hate—to hate my body, to hate my ethnicity, to hate most of all my weakness, my inability to stand up for myself. By then, I knew it wasn’t normal to curl up into a ball in the back of the school bus and spend the entire trip praying no one would speak to me; even worse, I had allowed myself to believe that somehow I deserved it, that it was my fault. The words that got stuck in my head then were not good words. Not chocolate words to roll around on your tongue, but acrid, astringent words that choked my throat as I swallowed them down.

If you’ve never had the particular pleasure of anxiety—which is the term a string of therapists I eventually turned to insisted on using, no matter how hard I argued against them—it’s like having Donald Trump’s Twitter feed running on repeat in your brain, spewing poisonous, terrifying words—way worse than “crooked Hillary.” Like a Trumpian tirade, anxiety is also based on some set of “alternative facts” that have only the most tenuous grasp on reality. The worst thing is that you believe it.

Words were a symptom of my illness, and they were equally a sign of my cure. First through poetry, where I rekindled my love of language, spending hours obsessively writing and rewriting each poem—no joke, 30, 40 drafts easy—before it was right. I eventually worked up the courage to read my poetry in front of people, and late-night coffee houses became my first church, my first glimmer of psychic salvation. People said they liked my poems, and I secretly started to believe it might be possible for me to like myself. Later, in college, a beautiful girl said she loved me—she used those words!—and I loved her, and I told her, too, and years later we said two other short, powerful words to each other—“I do”—and those words healed me, too, more than any poem.

Dropping Ashes on the Chalice

Adam Fajardo

Service for UUMAN, 7/8/18

Let’s begin with a story—more of a question, really—that Korean Zen master Seung Sahn was fond of asking his students.

Suppose that a man were to walk into this sanctuary, light a cigarette, and start using the chalice as an ashtray. What would you do? Would you stop him? Would you allow him to continue? Why or why not? Take a moment to decide, then, if you feel like it, share your response with someone sitting near you.

~~chime~~

Here is how Seung Sahn, the Zen master I just mentioned, would respond:

If you said you’d stop the man, you’re wrong. Seung Sahn would threaten to beat you with a stick for that answer.

If you said you wouldn’t stop him… you’re also wrong. Seung Sahn would threaten to beat you with a stick for that answer.

If you said, “the sun is shining and the breeze is rustling the leaves,” then you’re on the right track, and you might escape a beating.

Let’s see if we can unpack this paradox.

I encountered this question in a book called Dropping Ashes on the Buddha, a collection of Seung Sahn’s teachings drawn from dharma talks, letters, interviews, and personal anecdotes. In his version, the man blows smoke and drops ashes on a Buddha, not a chalice, but the point is the same, and the point, as you’ve probably guessed, has little to do with either Buddha statues or chalices. The point he’s making is about attachment, which is a particularly important concept for Buddhists, and one we can learn much from, too, whether we call ourselves Buddhists or not.

Here is some context. The problem that Buddhism sets out to solve is the problem of suffering—this is true of Buddhism in the same way that we could say Christianity sets out to solve the problem of sin. Why do we suffer in life? Is suffering necessary? Buddhist philosophy investigates this phenomenon and offers a path out of suffering.

Buddhist teachings, called dharma, hold that we suffer because of our attachments. Clinging to the past, or to ideas about how things ought to be, rather than experiencing things as they are, is the cause of suffering. So if it pains or offends us to see someone flick cigarette ash into the chalice, that’s because we’re clinging to an idea about the chalice as a sacred object or symbol of our identity. We haven’t yet grasped that all things are interrelated, that a cigarette and a chalice are not so different.

The Sanskrit word for this kind of suffering—this desperate clinging to ideas, perceptions, memories—is dukkha. Dukkha implies thirst, an insatiable thirst. Imagine working all day outside in the hot Georgia sun and trying to quench your thirst with salt water. That’s dukkha. No matter how much you drink, your thirst does not, cannot, ever cease. So for Seung Sahn, stopping the man is a sign that the student has not yet let go of attachments.

A major symptom of living in a state of dukkha is reliance on conceptual thinking—any sort of thinking really—because our concepts about things get in the way of experiencing them directly, like looking at the world through tinted sunglasses. Thich Nhat Hanh, another Buddhist teacher, describes going on a walk with a young girl. She points to some flowers and asks him what color they are. Aware that she is young enough to just see the colors without a concept like “red” getting in the way of the experience, he responds, “They are the color you see.” I think this is something we all feel from time to time, sometimes acutely, and we often become aware of it when the labels we adopt for ourselves or that are placed on us become constricting. Girl, sister, woman, lover, wife, mother. Gay, lesbian, straight, queer, bi, trans, asexual. White, black, Latino, Eskimo, and on and on, and on… While these labels help us orient ourselves to the world, they also stand between us and the world, between me and you. They make it difficult to see ourselves or each other as simply human.

~~chime~~

Okay, but what about not stopping the man? He said that was also a wrong answer. This layer takes a bit more thought to unpack. Imagine that you are a young Buddhist monk or nun, earnestly trying to understand, practice, and live the dharma. Remember that in most Buddhist traditions, monks and nuns give up most material possessions, shave their heads, eat simple food—sometimes only eat what they are given by the community outside their monastery—practice celibacy, and practice meditation, all of which cultivate a mindset and lifestyle based around nonattachment. If you were living in that environment, aspiring toward living in the present moment and not being overly attached to ideas, you might start to venerate, even possibly enjoy the feeling of nonattachment and to relish signs that you were moving away from attachments—which is to say, you might become attached… to nonattachment.

For Seung Sahn, the man dropping ashes on the Buddha represents someone who has become attached to nonattachment. He has gone so far in his spiritual progress that he no longer recognizes the difference between ash and Buddha, but this itself is a sneaky form of attachment—I think of it as a cousin to nihilism, the belief that nothing truly matters, in the end, because there is no God or higher purpose to life, and anyway the sun is going to go out someday, leaving this whole planet a cold, lifeless hunk of rock spinning quietly through space, so why bother with anything? That’s attachment to nonattachment.

Here is how Seung Sahn explains the point of his story:

“This person has understood that nothing is holy or unholy. All things in the universe are one, and that one is himself. So everything is permitted. Ashes are Buddha; Buddha is ashes. The cigarette flicks. The ashes drop.

But his understanding is only partial. He has not yet understood that all things are just as they are. Holy is holy; unholy is unholy. Ashes are ashes; Buddha is Buddha.”

In a way, the spiritual progress he is describing is a kind of circle. We start out attached to words, ideas, and concepts. With work and better understanding, that attachment loosens, and we may become instead attached to emptiness, to nonattachment. The point, however, is to return from that place and to see things, again, as they truly are. The chalice is a chalice, the cigarette a cigarette.

~~chime~~

What’s tricky about this question, I think, is that it lays an ethical question over the spiritual one, forcing us to think about what we should do about the man, not about the state of our spiritual progress. What strikes me in Seung Sahn’s response is the generosity and caring he exhibits. It’s almost as if he doesn’t consider the answer most people, including me, would give—that the man is an inconsiderate jerk. Instead, he assumes the best about him, thinking that he has let go of some attachments, but is now woefully attached to emptiness.

Here is another variation:

He says: “Here is a bell.

If you say it is a bell, you are attached to name and form.

If you say it is not a bell, you are attached to emptiness.

Is it a bell or not?”

Seung Sahn gives four answers to this question. The first three are “good” answers.

- Only hit the floor.

- Say, “The bell is laughing.”

- Say, “Outside it is dark, inside it is light.”

- Pick up the bell and ring it. This one is the only complete answer.

Two of these answers use words, and two are actions. The two statements, “The bell is laughing,” and “Outside it is dark, inside it is light,” seem to have no relationship to the question; their purpose is not to answer it but to bend the rules and expectations of language to get the student to stop thinking for a moment. The other two, hitting the floor and ringing the bell, also are meant to shock the questioner out of thinking. And it’s that moment of nonthought that all these puzzles and word games are trying to achieve. Not thinking, just being. When Zen masters strike their students or scream KATZ at the top of their lungs, as they frequently do in the stories he tells, the point is to provoke that moment of nonthinking.

~~chime~~

This talk has been too abstract, so let’s ground these questions in something concrete.

I am, among other things, a classic Fox News bogeyman: a liberal professor at a four-year college, one far more inclined toward Karl Marx than Ayn Rand. Though I do try to keep my personal politics out of the classroom, my perceptive students can glance, say, at the many black, brown, female, and gay authors on my American literature survey syllabus and correctly deduce my leanings well before we discuss Mina Loy’s “Feminist Manifesto” or Allen Ginsberg’s “Howl.” Those are some of my labels, and there is something seductive about them—something that makes them easy to attach to. If I were more important than I am, Fox News might target me with claims that I was brainwashing America’s youth with liberal propaganda, which to me is, I must admit, a rather more romantic and appealing story about me than what I really do, which is read the same freshman essay over and over until my eyes, in pure self-defense, cut their ligaments and drop out of my head.

A few months after I finished reading Dropping Ashes on the Buddha, the Unite the Right rally in Charlottesville happened, and I watched aghast as white supremacists marched through the streets protesting the removal of Confederate monuments. A few months after Charlottesville, I began a new semester of teaching. The first thing I noticed about one of my new students, whom I’ll call Steven, was that his beard stretched down nearly past his shoulders. The second thing I noticed was that he had a big tattoo of a Confederate flag on his forearm. With Charlottesville on my mind, I was immediately worried. This was a small night class, with a pretty even split between men and women, and a few more brown faces in the room than white ones. Because this is a first-year writing course, we have less overtly political material to cover than in my literature courses, but we do end up talking about issues of race, class, and immigration over the course of the semester.

So, let’s flip the question around: A man walks into my college classroom—or into the UUMAN sanctuary—with a Confederate flag tattooed on his arm. What do I do?

If I confront the tattoo, I am attached to name and form.

If I don’t confront it, I am attached to emptiness, to nihilism. Both ways, I’m wrong.

A couple weeks went by, and the more I got to know Steven, the more I liked him. Nothing in his behavior or attitude caused me the slightest concern. Yet there was the tattoo. One day, we were discussing an essay about how the rapper Tupac Shakur helped a biracial girl embrace her black side, something she couldn’t do before discovering his music. I came to class mildly worried about how the discussion would go. Steven, though, not only listened respectfully to his classmates, but he also spoke about race and class with a degree of sensitivity and insight that I honestly have not encountered in any other college freshman.

In discussions we had both in and outside of class, I later learned that he had served in the Army for many years before leaving to return to college. During his time in the Army, he had taken a series of seminars on racial and gender discrimination. These classes, he said, opened his eyes to the fact that other people had lived experiences vastly different from his own, and he’d so taken them to heart that he went on to become a trainer for these seminars.

I don’t know the story behind his tattoo—maybe he got it as an 18-year-old and grew to regret it. What I do know is that if I had reacted to the tattoo before getting to know him, our relationship would have suffered, and I might not have gotten to see the real Steven. As a self-identified conservative student, Steven, too, might have just seen me for my label, as the liberal propagandist professor that Fox News warned him about. I’m not saying that I was particularly wise in this situation. I think rather that I was very lucky—there are not many Stevens in this world, and one happened to pass through my classroom. I just didn’t screw it up. In these moments with him, I could feel my mind pulled in two directions. On the one hand, there was the tattoo, with all the negativity it symbolized to me. On the other hand, if I could consciously set down those associations and just focus on the present moment, everything was fine—more than fine, in fact, for he was unfailingly considerate and conscientious.

~~chime~~

My two-year-old son has reached that stage where the question he most frequently asks is, “What that?” He delights in learning all the names for things, and I enjoy telling him, too. He is entering the world of concepts—“mine” is another new favorite—with all of the joy and suffering they bring. Later, I’m told, we’ll reach the “why” stage, just to make things more complicated. For now, though, I occasionally try to channel some of Seung Sahn’s spirit when he asks me “What that?”, sometimes just handing him the thing he’s curious about, other times popping a morsel of food in his mouth instead of saying “tomato.”

Lately, I’ve begun thinking of “What that?” as a useful question for me to ask, too. The Zen mind is a child’s mind, after all, so I’ve been making a practice of asking myself, “What that?” I think I know what a chalice is and what a cigarette is, but looking at a familiar object, idea, or situation and asking myself, “What that?”, slows the busy pace of my mind and, sometimes, puts me back into a state where I can see something without being so wrapped up in thoughts.

Attachment, nonattachment. “What that?” Ring the bell. It’s Sunday and you are here.

~~chime~~

Filed under Uncategorized

On (Not Being) Moved by the Spirit

WA Reflection for 2/25/18

In 2003, when I was a junior in college, I got to spend a semester studying at Oxford University in England. The walk from the house that I shared with three other American undergrads to New College, where I met with my professors, took me down St. Giles’s Street past two significant landmarks: the Oxford Quaker Meeting House and The Eagle and Child, which is the pub where C.S. Lewis and J.R.R. Tolkien would meet with other writers to eat, drink, and read unfinished drafts of their work.

I’ll admit that I visited the pub before setting foot in the Quaker Meeting House, but from the start I was intrigued by the Quakers. I had read about their radical history, their pacifist reputation, and the egalitarian structure of their worship—with no official clergy or minister, on Sundays the Quaker society of “Friends” sits on simple benches arranged in a circle around the room. Quakerism holds that each individual has a direct relationship with the divine, with God, and so their “service” consists of a meditative silence punctuated by congregation members who speak “when the spirit moves them to speak.”

Up to this point in my life, I had attended church only when visiting my grandparents for extended periods, and even then, my family tried to weasel out of it. When asked about my religion or faith, I would identify as an “optimistic agnostic”—meaning that I had no idea if there was or wasn’t a God, but that I hoped for the best.

Before the first Quaker service I attended, the Friends welcomed me, explained the basics of their theology and worship practices, and even invited me to speak during their meeting—when the spirit moved me to do so.

“When the spirit moved me to speak?” What did that mean? How would I know? Would God whisper in my ear, and if he did, would it be the full text, or crib notes? Or would it be subtle? Would the ether begin to hum?

I was mostly drawn to Quaker meeting through the allure of sitting in silence with other people. (This same impulse led me to Buddhism later in life.) But I went to each Sunday’s meeting alive to the possibility that, sometime, the spirit might move me to speak. Sitting in silence with the congregation, I contemplated what it would mean for the spirit to move me to speak. I was 20 years old, so I knew just about everything, yet I somehow intuited that the intent of this spiritual practice was not for me to explain things to other people.

Truthfully, though several people would speak at each meeting, I don’t remember a single thing any of them said. But I do remember how they spoke. One woman, in particular, remains in my memory. Rising from the pew, her eyes half open, curly brown hair falling at her shoulders, she swayed gently back and forth and. Spoke. One. Word. At. A. Time. As though tugging at a thread. no one. could see. It reminded me of improvised jazz, but slower, halting, and without the safety net of an established rhythm or melody.

For three months, I sat in the meeting house and waited for the spirit to move me to speak. I never spoke. Whether I was not touched by the divine, or just shy, I can’t say. I wanted to speak, but the time never felt right. The call didn’t come.

In fact, not feeling called may have been a more important spiritual lesson, for the most profound moments of service occurred when I could hear nothing but the soft breath of the congregation. And this is fitting, because the Latin root of the word “spirit” is spirare, meaning “breathe.” And it was while sitting in the meeting house, a 19th-century building of wood and stone, that I first learned that just breathing can be a spiritual practice—one that takes a lifetime to master at that.

Excess and Contradiction

Sermon for UUMAN, New Year’s Eve, 2017

Good morning, and happy new year. I want to begin this new year’s message in an unlikely place: with a poem by Edna St. Vincent Millay. If you don’t know Millay, do yourself a favor and look her up one day when you’re killing time online. She’s more interesting than Facebook, I promise.

Nowadays, if you hear someone is a poet, the image that probably pops to mind is a shabby, starving artist type whose “real job” probably has more to do with pulling espresso shots than weaving rhyme schemes. Or, maybe, more generously, we might imagine an earnest MFA student scraping along by teaching freshman comp and creative writing at community college.

But Millay is different. In her time, the 1910s and 20s, Millay’s poetry made her a bona fide celebrity, an “it girl.” She was an early bohemian and modernist who lived in Manhattan and contributed to a burgeoning subculture that embraced feminism, free love, non-normative gender roles, and art for art’s sake—all a full 40 years before the beatniks and hippies would take up similar causes.

Here is the first poem from her most famous book, titled A Few Figs from Thistles, which you can also find printed in your order of worship.

“First Fig”

MY candle burns at both ends;

It will not last the night;

But ah, my foes, and oh, my friends–

It gives a lovely light!

Here, the speaker uses the image of a candle lit at both ends, a stock image or cliché, to describe living with bright, hot intensity. In this context, she is referring specifically to pursuing passionate love and bodily pleasures. And yet, as Millay is aware, this is not a sustainable way to live. Her candle “will not last the night.” She’s going to burn out, and she knows it.

But—and this is a crucial “but”—she then pivots. First, she addresses her “foes,” reminding us that acts of beauty, creativity, and pleasure can also be acts of resistance. Then she addresses her friends and insists that they also acknowledge its “lovely light.”

I love this little poem. It’s hard not to. If you think about it, her message is pure rock ’n’ roll, right? Burn bright, flame out early, but create a moment of intense beauty as you do. This is Jimi Hendrix before Jimi Hendrix was Jimi Hendrix. Or, to adapt Willard Motley, this is “living fast, dying young, and leaving a good-looking corpse” behind when you go.

Millay continues this defiant tone in the book’s second poem, which is also in your order of worship.

“Second Fig”

SAFE upon the solid rock the ugly houses stand:

Come and see my shining palace built upon the sand!

Millay continues her defiance when she points to the “ugly” houses built on solid rock. I imagine Millay might consider my own suburban house one of these “ugly houses.” In the second line, Millay again pivots, but here she invites us to come join her in appreciating the luminous castle built upon sand and waiting to be washed away. These ugly houses and shining palaces are, of course, metaphors for all creative works, but the message is the same.

And the rest of the book continues in the same vein of celebrating temporary, fleeting beauty and brief, intense pleasures: Wednesday’s passionate love that fades away, without any drama or regret, by Thursday.

For Millay, celebrating intense and unsustainable beauty was her way of thumbing her nose at prudish Victorian traditions. The Victorian era, generally speaking, highly valued propriety and austerity. Women’s dresses covered everything below the chin and above the ankle—but don’t worry, their shoes covered everything below the ankle. The rising middle class did everything in their power to bring “respectability” to the working class. Social and gender roles were clearly prescribed. Millay and her ilk burst on the scene as the Victorian era was slowly dying, causing massive scandals and creating incredible art. (The two are rarely separable.)

These are clearly not poems about Christmas, or Hanukkah, or Kwanzaa, or even New Year’s Eve. Nevertheless, I think these poems have something to teach us about this time of year.

The holiday season—which, if charted by my own average consumption of sugar, fat, and adult beverages, stretches from the end of October to January 1st—is also a season of intense and unsustainable indulgence in the pleasurable things in life. The winter holidays are when, among other traditions, we eat the best foods and drink the best drinks and spend money freely—often to excess—and generally are much more inclined to cast away our concerns for tomorrow.

When I read Millay, as when I listen to Iggy Pop or Jimi Hendrix, I fall in love with the idea of living for today, and according to my waistline, that attitude extends to my holiday plate as well. Yes, I think, that is the good life—burn the candle at both ends and grasp that brief moment of perfect, transcendent satisfaction.

Yet I quickly find that chasing after these fleeting pleasures is, frankly, exhausting. As much as I want to live in the shining palace built upon the sand, I also want to retreat to my safe, boring, “ugly” house built on solid rock. And I equally find myself drawn to the austere, stoic ideals. Like a Victorian, I think yes, the good life is mastering your desires and not giving in to empty temptations.

Now, a psychologist might look at my predicament and call it “cognitive dissonance.” Cognitive dissonance is psychology’s term for the discomfort that comes from holding two contradictory beliefs in mind at the same time—like a smoker who knows that smoking causes lung cancer but continues to smoke, or a poor person who votes for the politician vowing to cut welfare.

Our culture, it seems to me, has an advanced case of cognitive dissonance when it comes to the holidays and overconsumption.

On the one hand, we’re encouraged by friends, family, media, multinational corporations, and perhaps our own natural inclinations to go back for that second (or third) helping of cookies, or yet another glass of eggnog. Whether from nature or nurture, it seems right to pack on a few pounds come wintertime.

On the other hand, we’re simultaneously afraid of overdoing it—or at least I am. Some of these anxieties are born out of real health concerns. We know the dangers of consuming sugar and alcohol excessively. Additionally, we in the United States have inherited many of the Puritan attitudes that saw indulging in pleasure as sinful. The original sin—eating the forbidden apple of knowledge—was a sin of overreaching appetite.

These are not new problems, of course. In fact, whole schools of philosophy have grown up to deal with these issues.

One name commonly invoked to justify overconsumption is Epicurus, the ancient Greek philosopher whose name is the origin of Epicureanism. You may have seen issues of Epicurean, a magazine devoted to fine cooking and dining, in the grocery store checkout line, or Epicurious, a website for aspiring gourmets. Most people understand the philosophy of Epicureanism as about maximizing pleasure, especially the pleasure of eating and drinking, and as an adjective the word “Epicurean” denotes someone who is especially fond of luxurious consumption—so when we splurge on that prime rib roast, or throw an extra knob of butter into the skillet, or fill our glasses with another splash of punch, we’re all being little Epicureans. Just like when I read Millay’s injunction to live with fiery passion, I too feel a strong draw toward Epicurean delights. For instance, I’ve spent far more time than I’d ever admit studying—literally, studying—the techniques of great pizza making. (Aside: your dough must rise for at least 18 hours, don’t rush the proofing, slice toppings thin, super-hot oven.)

For Millay, as we saw a moment ago, embracing temporary beauty or pleasure was an act of defiance. She advocated bright, fiery passion in defiance of Victorian austerity. But is there anything defiant about eating more than you should during the holidays?

Maybe. The line that sprang to mind when I was lying awake one night pondering this problem was the injunction to “Eat, drink, and be merry, for tomorrow you may be dead.” The origin of this phrase is Biblical. In the Biblical context, this phrase was used to call out the hypocrisy of people who pursued worldly pleasure at the expense of their souls—that’s how St. Paul uses it. Its sentiment has been evoked in literature for more than a millennium. The Roman poet Horace, for instance, coined the phrase carpe diem in a poem advising people to make the most of today, since we can’t know what the future has in store. You’ve probably heard “carpe diem” translated as “seize the day,” but according to one source I consulted, “carpe” means “to pluck”—as in to pluck a fruit when it is ripe, and I like how “plucking” involves a much more visceral sense than the militaristic “seizing.” The moment, this moment, now, is a ripe fruit for you to pluck.

In the English Renaissance, carpe diem poems became a whole subgenre of poetry. The poet Robert Herrick, for example, famously advised the young:

Gather ye rosebuds while ye may,

Old Time is still a-flying;

And this same flower that smiles today

To-morrow will be dying.

An even starker and wonderfully morbid illustration of this idea comes from ancient Egypt. The Greek historian Herodotus describes the Egyptian feasting rituals, which were elaborate affairs in wealthy households. Remember, Egypt at that time was a major world power, so a feast at a wealthy Egyptian house would be like New Year’s Eve at the Kardashians’ home—or so I imagine. Unlike the Kardashians’ parties—again, I assume—Egyptian feasts featured what has been called the “skeleton at the feast.” At the end of an Egyptian feast, according to Herodotus, a man would enter the room carrying a replica corpse or skeleton that was designed to look as realistic as possible. He would carry this corpse from guest to guest, saying to each “look on this, and drink, and be merry, for when thou art dead, such shalt thou be.” (19th-century translation of Herodotus).

So in a way, maybe there is something defiant about how we celebrate the holidays with overindulgence. Maybe, as these ancient sources suggest, excessive eating and drinking helps us feel extra alive and thus defies the only true inevitable—death.

But even if that is true, it’s no way to live, at least not long term. A candle that burns at both ends will not last the night. Despite his reputation, Epicurus actually cautioned against overconsumption. Yes, he did think that the way to a good life was to maximize pleasure and minimize pain, but he also recognized that too much indulgence leads to pain, as I’m sure the majority of the over-21 crowd has experienced on January 1st at least once. Ironically, in fact, what Epicurus actually advocated wouldn’t fit well within Epicurean magazine. What we today call Epicureanism might be better labeled hedonism—the pursuit of pleasure above all else. Epicurus, though, does not argue that we should only pursue pleasure and avoid pain. Rather, he advises us to work to attain a state of being he called ataraxia.

Ataraxia is usually translated as being tranquil or unperturbed. In a state of ataraxia, there would be no need to wheel out a skeleton at the end of a feast, because there would be no feast. What Epicurus actually taught is that, when we free ourselves from the unnecessary pain caused by our minds, we need very little to be satisfied—simple food shared with friends in a peaceful setting is pleasure enough. He is very Buddhist, in fact: pain causes us to seek pleasure, but overindulgence in pleasure causes more pain.

I’ve wrestled with the cognitive dissonance of indulgence and austerity for a long time, and the closest I’ve come to finding an answer is by thinking of it as seasonal. To every thing, there is a season. Millay’s season of indulgence and fun followed the Victorian season of propriety and restraint. So if you feel the holidays have been excessive, as mine certainly have, consider following the advice of the Stoic philosopher Seneca, who encouraged a close friend to “vaccinate” himself against hardship by practicing austerity. Set aside a certain number of days, Seneca wrote, during which you eat meager, coarse food, all the while asking what it was about this condition that you feared. “Endure all this for three or four days at a time, sometimes for more, so that it may be a test of yourself instead of a mere hobby. Then, I assure you… you will leap for joy when filled with a pennyworth of food, and you will understand that a man’s peace of mind does not depend upon Fortune; for, even when angry she grants enough for our needs.”

And if you’ve planned a New Year’s Eve party for tonight, consider inviting a skeleton.

Thank you for listening, and happy new year.

Postscript:

Several people asked me about Millay after the service. If you’d like to read A Few Figs from Thistles, you can find a first edition online here for free: http://digital.library.upenn.edu/women/millay/figs/figs.html

Just Don’t Call Me Late for Dinner

A couple of weeks ago, one of my composition students asked me why I prefer to be called “Adam” rather than “Dr. Fajardo” in class. The question caught me off guard, and I didn’t give her a great answer, but, in some respects, being caught off guard is exactly why I ask my students to call me by my first name. This student was asking because she had accidentally called another professor by their first name. That professor had scolded her, saying something along the lines of, “It took me years to earn this degree, so you need to show me the respect that it entails.” I told her that I go by “Adam” because the norm at my undergraduate school was to be on a first-name basis with all of your professors. I valued that aspect of my college’s educational culture because it made the faculty feel much more open, available, and approachable, and I want my students to have a similar experience. That’s what I told her, but the question stuck with me.

Like my student’s other professor, I also want respect, but I’m somewhat suspicious of the respect that is automatically endowed through certifications, degrees, and prestige. This isn’t just an antiauthoritarian remnant from my short-lived punk phase. There is a real danger, I think, that comes from being at the top of your field. (People with terminal degrees, in my view, are basically at the top of their fields because, while there is always someone who can publish more, write a more field-changing book, or land a huge grant, we are the 1%-ers relative to the general population.) The danger is complacency. Recently, I listened to an interview with a chess prodigy and competitive martial artist who described how many martial arts champions go on to start their own schools. In their schools, however, they often train very little with their students because they are scared to lose. When they do train, they’ll rely on the familiar tricks and techniques that helped them win before, which means that the masters aren’t regularly challenging themselves to keep growing. I think a similar phenomenon can happen in higher education. For academics, being right is similar to winning a sparring match. It’s good to be knowledgeable, of course, but I think we should be wary of complacency if the goal is to remain sharp and present to students.

None of this is to say that I’m not proud of my degree or that I question other professors who insist on having students address them by their titles. I learned firsthand in my undergraduate college that being on a first-name basis with a teacher does not guarantee that person will be approachable or will allow you to ask challenging questions. I could certainly go by “Dr. Fajardo” and still cultivate an open, engaged relationship with my students. Also, going by my first name does not by any means remove the hierarchy that exists between my students and me. (And I don’t want that hierarchy to go away, either—if a student were doing something dangerous or harmful, I would not hesitate to use the full power of that hierarchy to correct the situation.) I just believe that empowering students to ask hard, insightful questions is healthy for the student and the faculty member alike.

The opening of Chris Palmer’s “Reflections on Teaching: Mistakes I’ve Made” reminded me of a Zen saying popularized by Shunryu Suzuki in Zen Mind, Beginner’s Mind. Palmer admits that, when he began a second career as a teacher, he did not have a “teaching philosophy beyond some vague, unarticulated feeling that [he] wanted [his] students to do well.” While Palmer uses this unprepared feeling to frame a discussion of how he began to make a lot of mistakes as a teacher, it also perfectly illustrates the power of what Suzuki might call shoshin, or “beginner’s mind.” The power of “beginner’s mind,” Suzuki explains, is that “In the beginner’s mind there are many possibilities,” while in the “expert’s mind there are few.” “Beginner’s mind” names a state of awareness and attention that is tuned in to the present situation (and thus not encumbered by habit, preconceptions, or the desire to be perceived as knowledgeable). Palmer is able to turn around his initial self-doubts by asking lots of questions and continually assessing (in a much more systematic way than I ever have) what was and was not effective in his teaching practice. This seems to me a natural and healthy outcome of a pedagogical beginner’s mind: he didn’t come into the classroom armed with theories and “best practices” that should work. Instead, he tried lots of things, failed often, and course-corrected along the way. I have been teaching composition and literature for about eight years—not so long in the big scheme, but more than long enough to become mired in habits that might limit the amount of learning that can happen in my classroom or could keep me from fully engaging with my students. Though I wasn’t thinking in these terms at the time, I can see that I made certain pedagogical choices this year that encouraged me to adopt a “beginner’s mind” in my classes. Going by my first name at GGC, when the norm seems to be to go by title, is one of those choices.

Getting out of the way.

Last week there was a day on my composition syllabus dedicated to teaching summary and paraphrase. These are two skills that are fundamental to a lot of the learning that we’ll do this semester, so it’s important to get them right. But let’s face it–as important as it is to be able to summarize and paraphrase well, talking about these skills can be pretty boring.

In the past, my lesson plan for teaching summary would go something like this: spend some time going over the textbook, then work on an activity that asked students to apply their new knowledge. Sounds fine, right? Only, it wasn’t. Inevitably, we’d get bogged down at the textbook phase. The problem was mismatched incentives: I felt responsible for conveying content, so even though I said we’d move through the book quickly, I’d get caught up in the particulars. This self-imposed sense of due diligence clashed with my students’ incentives. There was no real challenge in what I’d asked them to do, and it certainly wasn’t fun, so why would they put in extra effort just to be rewarded with more work?

This time around, I wanted to try something different, but how to make sure that my students both understood the content and had time for real, hands-on practice? My classrooms this semester all have laptop carts in them, so I designed an experiment. Before class, I created a new forum called the “1101 Writer’s Toolbox” in the Discussions page of our Brightspace site. Inside the forum, I made a couple of new topics. The first, simply titled “Summary Vs. Paraphrase,” prompted students to write a 10-sentence explanation of the difference between the two skills. I didn’t bother asking for a definition of each since they’d end up doing that while explaining the differences. The prompt also asked students to consider things like how much of their opinion should go into each, how long each should be relative to the original text, and so on. Working in small groups, my class had 15 minutes to write their explanation and post it to Brightspace. To raise the stakes somewhat, I said we’d be reading each response aloud and voting on the best one.

Then I took a step back and let them get to work. At first the class was quiet. They puzzled over the textbook for a minute and asked each other and me a few clarifying questions. But in less than five minutes a pleasant buzz filled the room as they started talking about the concepts and debating which wording to use. I moved around the room, monitoring their progress and reading over their shoulders, but for the most part I just kept out of their way.

When the time came to read their responses, I was surprised and pleased by how good they were. In fact, there was only one concept that none of the groups had mentioned, which was the idea that summaries also need to show the logical connections between the main points. Taking a moment to mention this point to them helped me transition into our final short activity.

For the last 20 minutes of class, I showed my students a short animated film called “Death Sails” and asked them to write individual summaries of it that would also be posted to the Brightspace discussion thread. Before they began writing, I nudged them to include not just a “laundry list” of events, but also why events turn out as they do–that is, to point out the logical connections between events. This final activity also went well, and I was pleased to see them connect the dots with causal phrases.

An added benefit of this activity is that it substantially increased the number of iterations of the main ideas. Iteration increases retention, and the structure of this lesson plan more than doubled the iterations while also increasing student engagement. It was also a lot more interesting for me–a win-win-win!

My side gig.

I have a guest post today over at the GradHacker blog. It talks about the benefits I’ve experienced from building up a small copy editing gig and why I think grad students would benefit from spinning their academic work into a paying freelance job.